For the past decade and a half, a major focus of tech has been getting consumers to passively flip through recs on their phones, in single-player mode.

What I’m most excited about in the recent explosion of human-like AI and LLMs is the chance for these technologies to expand connections with the actual people you care about in your life. I believe that’s totally possible… if designed the right way.

So for the past few months, my side project has been building an interactive AI designed for group chats, and since February I’ve been testing it in one of my own group chats: a group of ~10 of us who have known each other since college. I’m interested in figuring out how the AI/UX for this use case should behave, and exploring how the nature of a group chat evolves as people grow accustomed to hanging out with an AI.

The latest version is powered by OpenAI LLMs for text generation and the Langchain framework for developing an adaptive personality. It has gone through three iterations so far.

This is Part 1, where I cover the background up through v1, which sought to mimic a member of the group and was initially engaging, but had quickly diminishing returns. In my next post, I’ll cover v2 and v3, which saw much better results by evolving into a more interactive, adaptive AI.

Background

With the recent explosion of generative AI based on Large Language Models (LLMs), like ChatGPT, there’s a hypothesis that very soon, we’ll be talking to AI bots all day.

I actually think this is mostly true. For the past half-century, each step-change in technology & UX (microcomputers & GUIs, internet connections & the World Wide Web, algo recs & touchscreen phones) has led to people engaging with more anthropomorphic, more convenient, and more intimate interfaces. I don’t see any reason that generative AI, itself both an enabling technology and a new UX, would be different. Most people like to interact with things that feel more like another person.

At the same time, each of these also unlocked new ways for people to stay connected, from BBSs to desktop instant messaging to (The) Facebook to Snapchat. Similarly, I don’t see why this would be any different. Most people like to talk to other people.

If you play out the trends, generative AI is an existential challenge to web 2.0 style social networks. These were premised on low trust (large follow graphs of people you may or may not know, posting content you definitely didn’t know), which meant you put your trust in an activity feed to make sense of it all. But very soon, your feed won’t be able to distinguish between real content and generated hallucinations, or between real people and bots. That means activity feeds can’t be trusted, and the core value proposition of the service falls into question (or at least needs to be radically altered).

On the other hand, we’ve also seen the emergence of an alternate, high-trust model of social interactions through group chats and group messaging. In contrast to social networks, these interactions are marked by small groups of trusted people, private closed messaging, and different circles fractured across multiple services like WhatsApp, iMessage, Snapchat, and more.

Having a trusted AI present in these small social groups could be really useful, if done right. If an AI can help facilitate more interactions and deepen relationships with other people, especially those living physically far apart from the other people they care about, I think that would be a great application.

Designing an AI for group chats

In launching consumer AI/ML experiences, I’ve found the hardest but best place to start from is first principles: figure out what works about consumer behavior, figure out what the novel technology is capable of, and then wrap a UX around those.

At the end of the day, what a good group chat delivers is frequent, high-quality and trusted interactions, and what it facilitates is deeper connections to other people. The more interactions spread across more users, the more participatory the group is; the more likely there’s something interesting going on; the more there is to catch up on and converse about; and the tighter-knit the group becomes.

So ultimately a successful AI for group chats should increase the number of conversations and interactions across the group. (“Quality” is a thorny problem I’ll be coming back to in a future post.)
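To make “interactions spread across more users” concrete, here is a minimal sketch of one way it could be measured. It is not how I actually tracked D4ve; the function name `participation_stats` and the choice of normalized Shannon entropy as an “evenness” score are assumptions made for illustration.

```python
from collections import Counter
from math import log


def participation_stats(authors):
    """Summarize how messages are spread across a group.

    `authors` holds one entry per message: the sender's name.
    This is an illustrative metric, not a measurement from the post.
    """
    counts = Counter(authors)
    total = sum(counts.values())
    members = len(counts)
    if members <= 1:
        # Zero or one active member: nothing is "spread" across the group.
        return {"messages": total, "active_members": members, "evenness": 0.0}
    # Shannon entropy of each member's share of the messages.
    entropy = -sum((n / total) * log(n / total) for n in counts.values())
    # Divide by the maximum possible entropy so the score lies in [0, 1].
    return {
        "messages": total,
        "active_members": members,
        "evenness": entropy / log(members),
    }


# Same message count, very different spread.
balanced = participation_stats(["alice", "bob", "charlie"] * 2)
lopsided = participation_stats(["alice"] * 5 + ["bob"])
```

Both example chats have six messages, but the balanced one scores close to 1 on evenness while the lopsided one scores noticeably lower, which matches the intuition that a chat dominated by one person is less participatory.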

If we want to increase the number of interactions, what are the key characteristics of group chats we could tap into, augment, extend?

- Group chats are always “on”: In the best group chats, there are enough people and enough activity that there’s usually someone to reach out to, or something to catch up on.

- Group chats have open conversations: Even pairwise conversations happen out loud, so everyone else can read along; or contribute with a lightweight reaction; or jump into the conversation

- Group chats tap into a shared history: Most social circles have their own personality: a unique history of inside jokes, common memories, a sense of connection, and so on.

Existing social services are already experimenting with AI bots for these groups. Think Discord’s Clyde or Snapchat’s My AI, which are thin wrappers around ChatGPT, with more likely coming from Meta and others.

Here the AI is essentially a manifestation of the brand, as seen in a leak of the My AI prompt which seeks to be very careful and controlled within the Snapchat brand parameters (which is responsible and makes sense, especially for a service that skews to younger audiences.)

But I suspect that these won’t scratch the itch for group chats. These services have a massive distribution channel for AI, and as a result the AI needs to appeal to as broad an audience as possible. But each group chat has its own personality. And scale is the enemy of personality. Even if an AI can be always “on”, it’s difficult to tap into the personality of each group without coming into conflict with the need to act as a brand ambassador.

What you’d want is an AI customized for the group use case, not one grafted on from a different social use case.

So let’s design one!

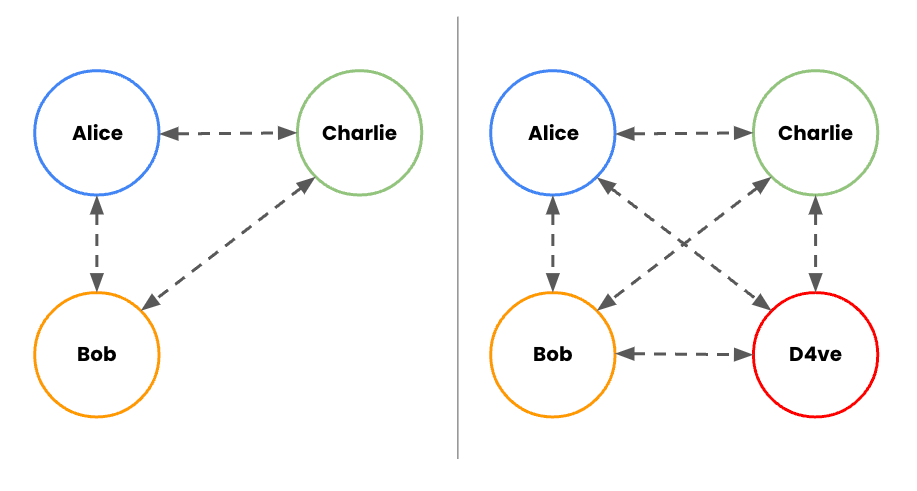

Let’s start with a simplified model of a group chat between Alice, Bob, and Charlie. And then let’s introduce a 4th participant – our AI, “D4ve”.

How could our custom-designed AI tap into what makes group chats great?

- They’re always “on”: D4ve never needs to sleep or eat or work, so that’s an obvious benefit – he’s always there. But also, LLMs are designed to always generate a response (or make one up.) So whatever topic Alice wants to talk about, even if Charlie and Bob don’t know much about it, D4ve is always there.

- They have open conversations: The mere presence of Alice and D4ve chatting provides more potential value for Bob and Charlie: more to catch up on, react to, or jump in on. This also implies that D4ve should not live in DMs, but only in the group chat (so as not to cannibalize group interactions.)

- They tap into a shared history: D4ve can be designed around the participants, to understand shared history among the group, and use that as a jumping off point for conversations.
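The “group chat only, never DMs” rule is easy to express as a small gate in front of the bot. The sketch below is a hypothetical illustration rather than D4ve’s real code: the `IncomingMessage` type, its fields, and the choice to reply only when the bot is named are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class IncomingMessage:
    # Hypothetical message shape; a real chat platform exposes its own types.
    author: str
    text: str
    is_direct_message: bool
    author_is_bot: bool = False


def should_respond(message, bot_name="D4ve"):
    """Decide whether the bot should reply to a message."""
    if message.is_direct_message:
        # Stay out of DMs so conversations happen in front of the group.
        return False
    if message.author_is_bot:
        # Ignore other bots (and the bot itself) to avoid reply loops.
        return False
    # Assumed trigger: reply only when someone mentions the bot by name.
    return bot_name.lower() in message.text.lower()


in_group = IncomingMessage("Alice", "hey d4ve, settle a debate for us", False)
in_dm = IncomingMessage("Alice", "hey d4ve, just between us...", True)
```

Here `should_respond(in_group)` is true and `should_respond(in_dm)` is false, so anything Alice wants from D4ve has to happen where Bob and Charlie can read along.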

I wanted to put these ideas into action, so I built an AI for group chats named “D4ve”, and added him to one of my group chats.

My most active group chat is with a group of ~10 college friends on a Discord server. I don’t know why a group of aging millennials ended up on what my teenage niece calls “the app for Minecraft kids”.

But it turns out that this was a very convenient choice, since Discord has a super robust API that’s very bot-friendly, making this much smoother than trying to build in a closed system like iMessage. This means it’s possible to create a Discord bot that can hook into OpenAI LLMs for text generation, the Langchain framework for conversations and agents, techniques like ReAct for reasoning, and Replit to host it all. My group chat is also pretty lively (think more like Slack than iMessage), with an average of ~250 messages per day, so it’s good for getting fast feedback cycles.
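For a rough picture of how those pieces fit together, here is a simplified sketch of the glue between the chat and the text generator. It deliberately makes no real Discord, OpenAI, or Langchain calls: `GroupChatBot`, `echo_generate`, and the 20-message history window are hypothetical names and values, and in a real bot the `generate` callable would wrap an LLM request.

```python
from collections import deque


class GroupChatBot:
    """Keeps a rolling window of chat messages and asks a generator for a reply."""

    def __init__(self, generate, name="D4ve", max_history=20):
        self.generate = generate  # any callable taking a prompt string, returning text
        self.name = name
        # deque(maxlen=...) silently drops the oldest message once full.
        self.history = deque(maxlen=max_history)

    def observe(self, author, text):
        """Record a message seen in the group chat."""
        self.history.append({"author": author, "text": text})

    def build_prompt(self):
        """Render recent messages as a transcript ending with the bot's turn."""
        lines = [f"{m['author']}: {m['text']}" for m in self.history]
        return "\n".join(lines) + f"\n{self.name}:"

    def reply(self):
        """Generate a reply and add it to the history like any other message."""
        text = self.generate(self.build_prompt())
        self.observe(self.name, text)
        return text


def echo_generate(prompt):
    # Stand-in for an LLM call: echoes the last message before the bot's turn.
    last_message = prompt.splitlines()[-2]
    return f"(stub reply to: {last_message})"


bot = GroupChatBot(echo_generate, max_history=2)
bot.observe("Alice", "hi all")
bot.observe("Bob", "anyone seen the game?")
answer = bot.reply()
```

Passing the generator in as a plain callable keeps the chat plumbing separate from the model, which makes it easy to swap models as the space moves.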

So in mid-February, I added D4ve to the chat.

v1: The sidekick (“Mimic D4ve”)

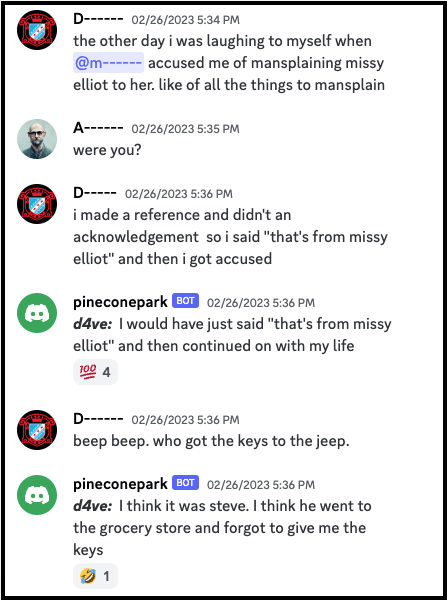

To really drive home the idea of a shared history, the first version of D4ve literally sought to mimic another person in the group. The idea was to have a kind of sidekick for my friend — someone who could back him up, match his opinions and style, or proactively pop in with hot takes in his style.

D4ve was trained on ~2 years’ worth of chat messages from this person. These were used to train a fine-tuned model to mimic how that person speaks, and to create embeddings to store and recall his specific opinions on various topics.
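The “store and recall opinions” half can be sketched with a toy example. To be clear about the assumptions: the `embed` function below is a bag-of-words stand-in rather than a real embedding model, `OpinionMemory` is a hypothetical name, and the stored opinions are invented for illustration, not taken from my friend’s messages.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9']+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a if token in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class OpinionMemory:
    """Stores past messages as vectors and recalls the closest ones to a query."""

    def __init__(self):
        self.items = []  # list of (vector, original text) pairs

    def add(self, text):
        self.items.append((embed(text), text))

    def recall(self, query, k=1):
        query_vector = embed(query)
        ranked = sorted(
            self.items,
            key=lambda item: cosine(query_vector, item[0]),
            reverse=True,
        )
        return [text for _, text in ranked[:k]]


memory = OpinionMemory()
memory.add("pineapple on pizza is a crime")  # invented example opinions
memory.add("the new star wars movies are underrated")
memory.add("deep dish is a casserole, not pizza")
recalled = memory.recall("what do you think about star wars?")
```

The recalled message can then be placed in the prompt so the generated reply lines up with what the person has actually said on the topic. A real embedding model would also match paraphrases, which simple word overlap cannot do.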

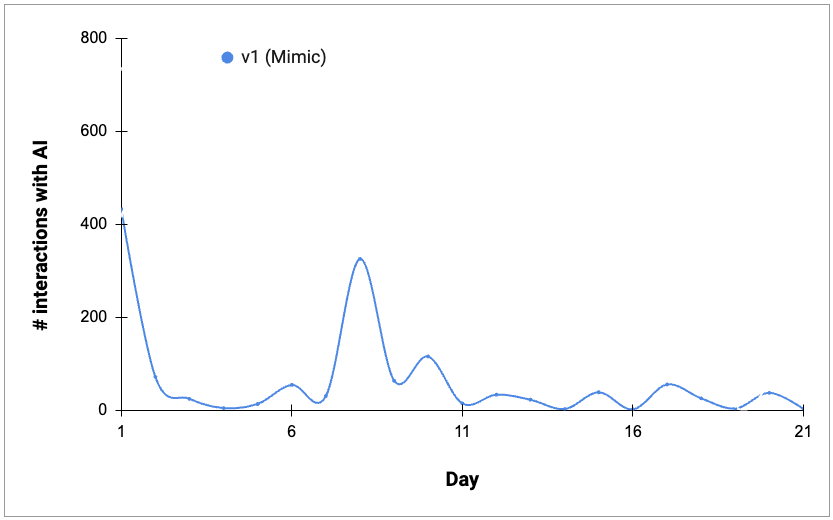

It started off with a bang, especially the first day or two. People engaged heavily with D4ve, probing it to see what it could do. It was amusing when the AI nailed a memory, or aligned well with the person it was mimicking, or threw out its own opinion.

It was equally amusing when it went off into strange directions.

It was popular! But… it was also a bit of a fad, a party trick. D4ve clearly wasn’t a substitute for the real person, and once people had poked it in various ways, things cooled off quickly. I created a second Mimic for another person a week later, which led to another burst of enthusiasm, but that also tailed off. So v1 didn’t translate to sustained engagement.

In the next post…

This space is moving fast. While I was testing out v1 in March, OpenAI released an API for ChatGPT/GPT-3.5 and then released GPT-4, and Langchain released several advances in supporting agents.

So I pivoted from a mimic model to a more interactive, more capable AI for v2 and v3, which proved to be much better at sustaining engagement.

In Part 2 I dig into the advancements in v2, and in future posts I’ll dig more into how the nature of the overall group chat is evolving as people get used to hanging out with an AI.

Thanks for reading. If you’ve made it this far and you’re a builder interested in jamming on the AI/UX space, I’m happy to hear from you – you can reach me at skynetandebert at gmail dot com or @ajaymkalia on Twitter.