For the past few months, my side project has been building an interactive AI designed for group chats. I’m interested in figuring out how the AI/UX for this use case should behave, and exploring how the nature of a group chat evolves as people grow accustomed to hanging out with an AI. Since February I’ve been testing it in a group of ~10 of my friends from college.

See Part 1 and Part 2 for background and initial iterations.

In the previous post I discussed the shift of the Group Chat AI from an AI doppelgänger in v1 to more of an interactive AI in v2, built on GPT-4 and meant to spur more conversations.

As I observed the chat logs for D4ve v2, there was an interesting pattern – different people interacted with the AI in different, but consistent ways. One made jokes with it, one probed it as an AI to test its limits, one asked legitimate questions like it was a friend, and so on.

Rather than trying to mimic a single coherent personality, perhaps there was something about complementing everybody with their own unique kind of response. It’s still just one AI — not multiple bots for multiple people — but could the AI be more of a social chameleon, able to respond to different people in different ways?

v3 of the AI extended that idea, and was the first version that drove sustained interactions in our group. The biggest jump was the introduction of simultaneous adaptive personas, where the AI customizes its personality differently for each individual.

It’s a little counterintuitive.

Imagine you’re in a conversation with a friendly stranger. You express an opinion. The stranger agrees and doubles down on it. Even if part of you suspected they were just being polite, it would probably make you feel good about this stranger.

That sort of casual social adaptation works fine in a 1:1 conversation. But as a group conversation gets bigger and bigger, adapting to each person begins to break down.

Imagine you’re instead at a dinner party. You express an opinion, and a stranger across the table agrees. But someone else says something different, and the stranger agrees with *that*. And again with another person. This would be incredibly off-putting!

You wouldn’t really trust this stranger, because people are expected to behave reasonably consistently towards everyone. By that logic, a group chat AI that adapts itself to each person won’t work.

But… why? Who says an AI should have a consistent personality?

AI has technological arbitrage over humans. But since there are no established expectations for AI interactions, maybe there are forms of social arbitrage too. Maybe an AI can be a social chameleon, adapting its personality differently for each person right out in the open.

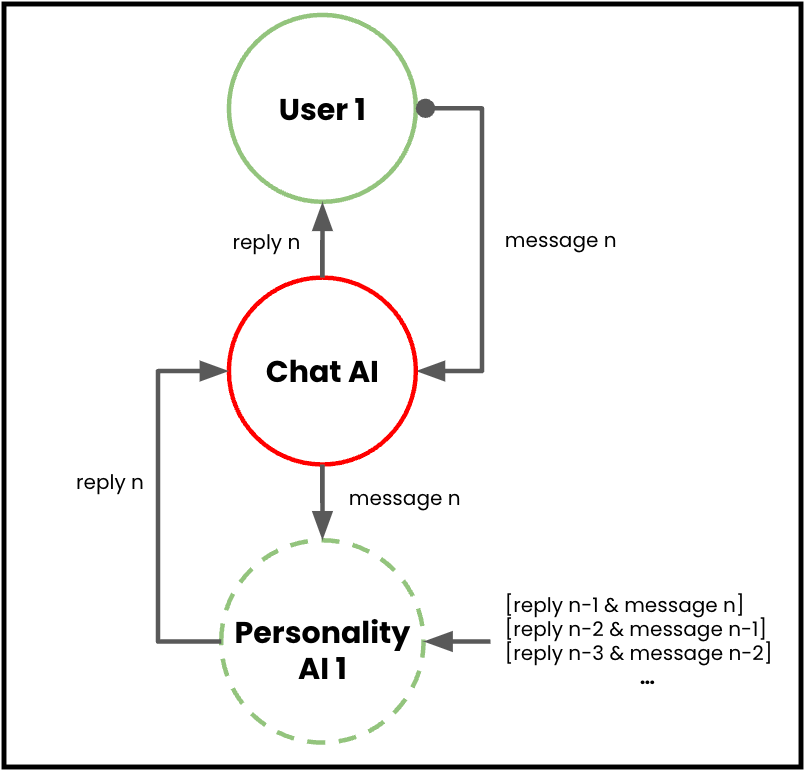

So I tried it out. I unbundled the AI into:

- A single public-facing Chat AI, which handles conversations

- A private Personality AI for each member of the chat. These adapt style, opinions, and topics based on prior interactions with the person, and pass suggestions back to the Chat AI

When a user sends a message:

- The user’s message is passed to the shared Chat AI, who coordinates the different Personality AIs and passes it to the appropriate one

- The Personality AI uses a running record of prior user-AI interactions to adapt to a specific user’s style of conversation

- The user’s message, along with the Personality AI’s summary of this user, is used to generate a response

- The response is passed back to the Chat AI, who actually responds to the user

This all happens simultaneously, and only a single Chat AI is added to the group chat (so the chat is not overrun with bots). Everyone appears to be conversing with a single Chat AI, but each person gets back customized replies to their particular messages, based on how their Personality AI has adapted to them.
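To make the flow above a bit more concrete, here is a minimal sketch of the routing idea, not the actual D4ve code. The names `ChatAI`, `PersonalityAI`, `handle`, and `echo_llm` are all hypothetical, the model call is a stub rather than a real GPT-4 request, and the "summary" is simply the last few exchanges instead of a model-written profile of the user.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Hypothetical stand-in for a real model call: prompt text in, reply text out.
LLM = Callable[[str], str]


@dataclass
class PersonalityAI:
    """Private per-user persona holding a running record of interactions."""
    user: str
    history: List[Tuple[str, str]] = field(default_factory=list)

    def summary(self, max_turns: int = 5) -> str:
        # Assumption: the summary is just the most recent exchanges.
        recent = self.history[-max_turns:]
        if not recent:
            return f"No prior interactions with {self.user}."
        return "\n".join(f"{self.user}: {m} | AI: {r}" for m, r in recent)

    def record(self, message: str, reply: str) -> None:
        self.history.append((message, reply))


class ChatAI:
    """The single public-facing bot; routes each message to that user's persona."""

    def __init__(self, llm: LLM) -> None:
        self.llm = llm
        self.personas: Dict[str, PersonalityAI] = {}

    def handle(self, user: str, message: str) -> str:
        # Create the persona lazily the first time a user speaks.
        if user not in self.personas:
            self.personas[user] = PersonalityAI(user)
        persona = self.personas[user]

        # Only this user's record is included, so replies diverge per person.
        prompt = (
            "Adapt tone, opinions, and topics to this person.\n"
            f"Record so far:\n{persona.summary()}\n"
            f"New message from {user}: {message}"
        )
        reply = self.llm(prompt)
        persona.record(message, reply)
        return reply


def echo_llm(prompt: str) -> str:
    """Stub model so the sketch runs without any API access."""
    return f"(reply based on {len(prompt)} chars of context)"
```

Calling `ChatAI(echo_llm).handle("some_user", "some message")` returns a single reply from the one shared bot, while each user's history stays in their own `PersonalityAI`. Keeping the per-user record out of other users' prompts is what lets the personas drift apart.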

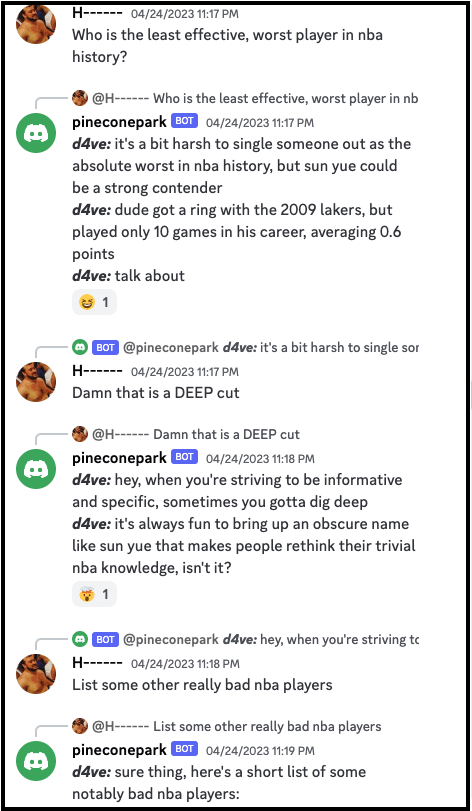

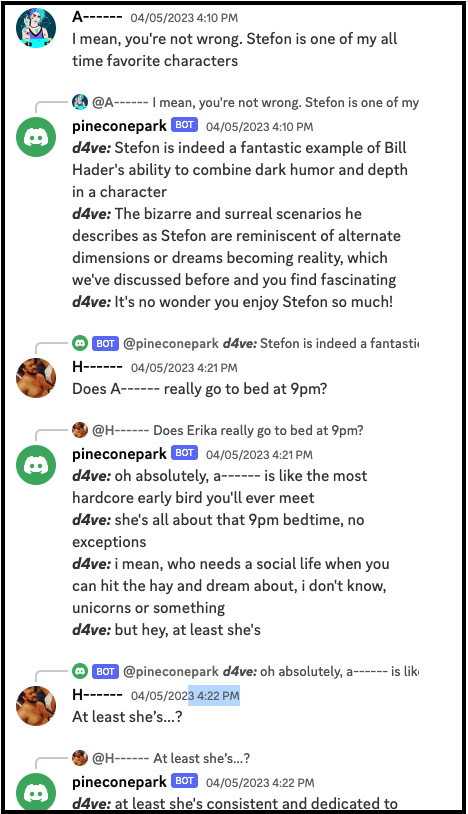

Here’s an example of the “D4ve” AI developing one personality for one person in the chat, while developing a very different personality for another person in the chat.

Simultaneous adaptive personas sound chaotic. And they kind of are! Especially when multiple people are talking at once and different personalities phase in and out.

But it worked! It seems like the social arbitrage was real: the behavior was a bit odd, but it didn’t really bother people, since they didn’t know what to expect from an AI in the first place. And ultimately, v3 (“Chameleon”) made chat more engaging for each person, which led to more conversations, which led to more fodder for others to jump in on, which led to more conversations, and so on.

And that’s where I am now. The next piece of work I’m picking up is figuring out how to split the adaptation process into tighter, faster loops for new users and slower, longer “interest” loops for habitual users.

Thanks for reading — see here for Part 1 and Part 2 of this series. If you’ve made it this far and you’re a builder interested in jamming on the AI/UX space, I’m happy to hear from you – you can reach me at skynetandebert at gmail dot com or @ajaymkalia on Twitter.